When most ed-tech platforms say they're "adaptive," they mean this: questions are tagged as easy, medium, or hard. Get three easy ones right and the system promotes you to medium. Get three medium ones wrong and it drops you back to easy. That's not adaptation — that's a simple state machine with three states.

Item Response Theory (IRT) is a psychometric framework that does something more precise: it estimates the probability that a specific student will correctly answer a specific question, based on both the student's measured ability and the item's statistical properties. It's the method behind the GRE, the SAT, and many national standardised assessments. We adapted it for primary maths — and the difference in what we can tell teachers is substantial.

The Problem With Difficulty Bands

Difficulty-band systems treat all students in the same band as equivalent and all questions in the same band as interchangeable. Neither is true.

A question tagged "medium" because it involves two-digit multiplication might be trivial for a student who has memorised their times tables and is simply asked to apply a standard algorithm. The same question might be genuinely hard for a student who struggles with the 7× table specifically, even though they're perfectly competent at everything else at the same "medium" level.

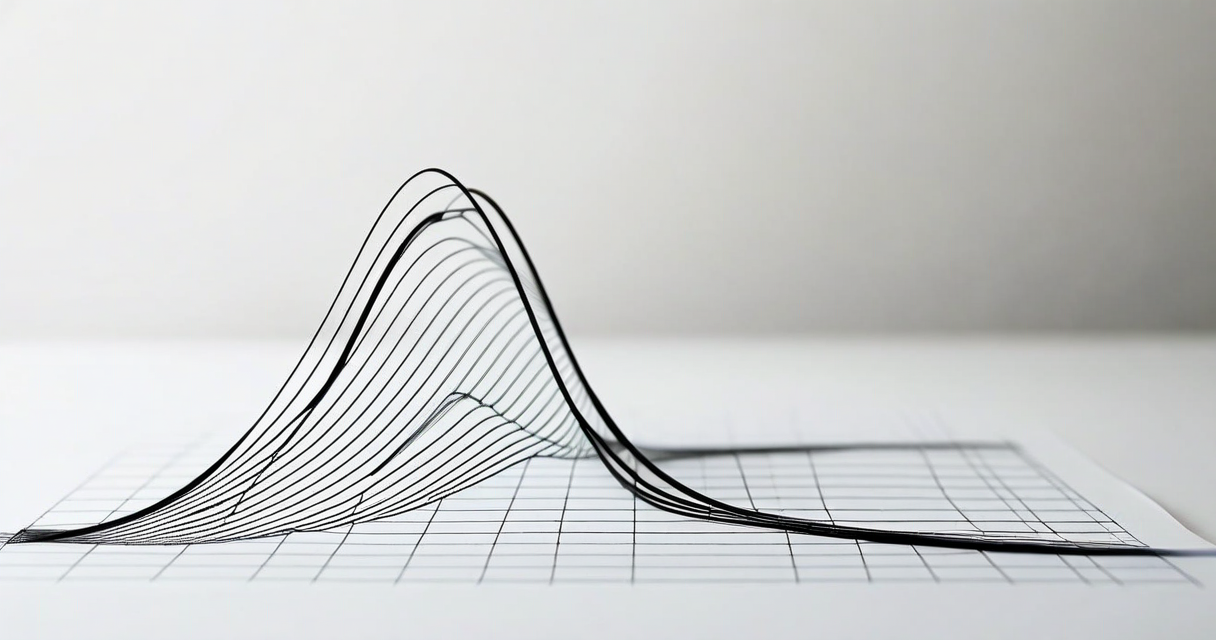

Difficulty bands flatten this variation. IRT doesn't. It treats each item as having its own statistical properties: discrimination (how well the item separates students of different abilities), difficulty (the ability level at which a student has a 50% chance of answering correctly once guessing is discounted), and guessing probability (for multiple-choice questions, the likelihood of a correct answer from a student with near-zero relevant knowledge).

The Three-Parameter IRT Model

The version of IRT we use is the three-parameter logistic model (3PL), which is standard in high-stakes assessments. For each item, the model defines three parameters:

a (discrimination): How sharply the item distinguishes between students just below and just above the difficulty threshold. A high-discrimination item gives very different probabilities of success to students at ability 1.8 vs. ability 2.2. A low-discrimination item doesn't tell you much about where a student sits.

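b (difficulty): The ability level at which a student has a 50% chance of success, net of guessing. With the guessing parameter c described below, the raw probability of a correct answer at ability b is (1 + c)/2, halfway between the guessing floor and certainty. A question with b = 2.0 is harder than one with b = 1.0, where ability is measured on a standardised scale.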

c (guessing/pseudo-chance): The lower asymptote — the probability that a student with effectively zero relevant ability will still answer correctly (relevant for multiple-choice items). For open-answer questions, this parameter is typically near zero.

Taken together, these three parameters produce a sigmoid curve — the Item Characteristic Curve (ICC) — that describes exactly how the probability of a correct answer changes as student ability increases. This is far more informative than a three-label system.
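To make the curve concrete, here is a minimal sketch of the 3PL probability function. The item parameters are made up for illustration, and the sketch assumes the plain logistic form without the 1.7 scaling constant that some texts include.

```python
import math

def icc_3pl(theta: float, a: float, b: float, c: float) -> float:
    """Probability of a correct answer under the 3PL model.

    P(theta) = c + (1 - c) / (1 + exp(-a * (theta - b)))
    """
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

# Hypothetical item: a four-option multiple-choice question.
item = {"a": 1.5, "b": 2.0, "c": 0.25}

for theta in (1.8, 2.0, 2.2):
    p = icc_3pl(theta, **item)
    print(f"ability {theta:.1f}: P(correct) = {p:.3f}")

# At theta == b the curve sits halfway between the guessing floor c and 1,
# i.e. at (1 + c) / 2.
p_at_b = icc_3pl(item["b"], **item)
```

Raising a steepens the curve around b, raising b shifts it to the right, and raising c lifts the floor that very low-ability students cannot fall below.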

Calibrating Items From Response Data

IRT parameters aren't assigned manually — they're estimated from real response data. During our pilot with 340 students across four primary schools in South London, each question was treated as a calibration item: the correct/incorrect responses from all students, combined with our initial estimates of student ability, fed a maximum-likelihood estimation process that converged on item parameter estimates for each question in our library.

This calibration process requires a minimum number of responses per item to produce stable estimates. For primary-level maths facts — questions with relatively stable difficulty across students — we found 200 responses per item sufficient for b-parameter estimates within ±0.1 on the logit scale. Discrimination estimates required more responses, and our a-parameters for newer items carry wider confidence intervals until we accumulate more data.
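The idea behind calibration can be sketched in a few lines. This is a deliberately simplified illustration, not our production pipeline: it treats student abilities as known, holds a and c fixed, and estimates only b for a single item by grid search. The function names and toy data are assumptions made for the example.

```python
import math

def icc_3pl(theta, a, b, c):
    """3PL probability of a correct answer."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

def log_likelihood(b, abilities, responses, a, c):
    """Log-likelihood of one item's 0/1 responses for a candidate difficulty b."""
    total = 0.0
    for theta, correct in zip(abilities, responses):
        p = icc_3pl(theta, a, b, c)
        total += math.log(p) if correct else math.log(1.0 - p)
    return total

def estimate_difficulty(abilities, responses, a=1.0, c=0.0,
                        lo=-4.0, hi=4.0, step=0.01):
    """Grid-search the b value that maximises the log-likelihood."""
    n_steps = int(round((hi - lo) / step))
    grid = [lo + i * step for i in range(n_steps + 1)]
    return max(grid, key=lambda b: log_likelihood(b, abilities, responses, a, c))

# Toy data: six students with known abilities; the three weakest miss the item.
abilities = [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
responses = [0, 0, 0, 1, 1, 1]

# The estimate lands between the strongest student who missed the item
# and the weakest student who got it right.
b_hat = estimate_difficulty(abilities, responses)
print(f"estimated difficulty: {b_hat:.2f}")
```

With only six responses the likelihood is nearly flat around its peak, which is the intuition behind needing a few hundred responses per item before an estimate is stable.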

The practical implication: our oldest items (present since the platform launched) have well-calibrated parameters. New items added to the library carry provisional parameters that update as student responses accumulate. We're transparent with schools about which items are in calibration and which are fully validated.

How We Estimate Student Ability in Real Time

Once items have calibrated parameters, estimating student ability from a response sequence is, at its core, a matter of finding the ability value that makes the observed response pattern most likely. Because items differ in difficulty, discrimination, and guessing probability, which questions a student gets right matters as well as how many. Take a student who answered 14 out of 20 questions correctly, with correct answers concentrated on higher-b items and errors clustered on mid-b items. Their ability estimate will differ from that of a student with the same raw score who missed only the hardest items.
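The effect of the response pattern can be shown with a small sketch. The ten items and both response patterns below are invented for illustration, and the estimator is a plain grid-search maximum-likelihood routine rather than our production code.

```python
import math

def icc_3pl(theta, a, b, c):
    """3PL probability of a correct answer."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

def ability_mle(items, responses, lo=-4.0, hi=4.0, step=0.01):
    """Grid-search the ability that maximises the likelihood of the responses."""
    def log_lik(theta):
        total = 0.0
        for (a, b, c), correct in zip(items, responses):
            p = icc_3pl(theta, a, b, c)
            total += math.log(p if correct else 1.0 - p)
        return total

    n_steps = int(round((hi - lo) / step))
    grid = [lo + i * step for i in range(n_steps + 1)]
    return max(grid, key=log_lik)

# Ten hypothetical items (a, b, c), ordered from easiest to hardest.
difficulties = (-2.0, -1.5, -1.0, -0.5, 0.0, 0.5, 1.0, 1.5, 2.0, 2.5)
items = [(1.2, b, 0.2) for b in difficulties]

# Two students with the same raw score of 7 out of 10.
missed_hardest = [1, 1, 1, 1, 1, 1, 1, 0, 0, 0]
missed_middle = [1, 1, 1, 1, 0, 0, 0, 1, 1, 1]

theta_a = ability_mle(items, missed_hardest)
theta_b = ability_mle(items, missed_middle)
print(f"missed only the hardest items: {theta_a:.2f}")
print(f"missed the mid-difficulty items: {theta_b:.2f}")
```

With these made-up parameters the second student receives the lower estimate: when the guessing parameter is above zero, correct answers on hard items are partly attributed to chance, while errors on easier items count heavily against the student.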

We use a variant of Computerised Adaptive Testing (CAT) to select the next question. After each response, we update the student's ability estimate using a Bayesian Expected A Posteriori (EAP) approach, which takes the mean of the posterior distribution over ability. The next item is then selected to maximise Fisher information at the current ability estimate. In practice this favours highly discriminating items whose difficulty b lies close to the student's estimated ability (for items with a non-zero guessing parameter, slightly below it). This is the question expected to reduce the uncertainty in our estimate the most.
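A minimal sketch of one step of this loop follows. It assumes a standard normal prior over ability and a fixed grid for the posterior, and the item pool is hypothetical. The function names are ours for this example and do not describe the production engine.

```python
import math

def icc_3pl(theta, a, b, c):
    """3PL probability of a correct answer."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

GRID = [-4.0 + 0.05 * i for i in range(161)]  # ability grid from -4 to +4

def eap_estimate(items, responses):
    """Posterior mean and SD of ability under an assumed N(0, 1) prior."""
    weights = []
    for theta in GRID:
        w = math.exp(-0.5 * theta * theta)  # unnormalised prior density
        for (a, b, c), correct in zip(items, responses):
            p = icc_3pl(theta, a, b, c)
            w *= p if correct else 1.0 - p
        weights.append(w)
    total = sum(weights)
    mean = sum(t * w for t, w in zip(GRID, weights)) / total
    var = sum((t - mean) ** 2 * w for t, w in zip(GRID, weights)) / total
    return mean, math.sqrt(var)

def fisher_information(theta, a, b, c):
    """Item information for the 3PL model at ability theta."""
    p = icc_3pl(theta, a, b, c)
    return a * a * ((p - c) / (1.0 - c)) ** 2 * (1.0 - p) / p

def pick_next_item(theta, pool, used):
    """Index of the unused item with the highest information at theta."""
    candidates = [i for i in range(len(pool)) if i not in used]
    return max(candidates, key=lambda i: fisher_information(theta, *pool[i]))

# Hypothetical pool of (a, b, c) items.
pool = [(1.0, -1.0, 0.0), (1.0, 0.2, 0.0), (1.0, 1.5, 0.0), (1.8, 0.9, 0.2)]

theta, sd = eap_estimate([], [])             # before any response: prior mean
first = pick_next_item(theta, pool, used=set())
theta_after, sd_after = eap_estimate([pool[first]], [1])  # student answers correctly
print(f"first item: {first}, estimate moves from {theta:.2f} to {theta_after:.2f}")
```

For items with no guessing and equal discrimination, maximising information reduces to picking the item whose difficulty is nearest the current estimate. Discrimination and guessing shift that choice, which is why the selection works from the information function rather than from b alone.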

In practice, this means students who are answering well see progressively harder questions, while students who are struggling see progressively easier ones — but the transitions are continuous and individualised, not stepped across three discrete bands.

What This Looks Like in the Teacher Dashboard

We translate IRT ability estimates into a percentile score that teachers can interpret intuitively. A student at the 45th percentile is performing at roughly average for their year group in our normed sample. A student at the 80th percentile is performing above four-fifths of their peers.

The dashboard also shows standard error of measurement (SEM) for each student's ability estimate — because a score of 65th percentile with a SEM of ±3 percentile points means something different from a score of 65th percentile with a SEM of ±12 percentile points. The latter student has answered too few questions for a confident estimate; the system flags this and prompts teachers to schedule an additional session.
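One way to picture the conversion is sketched below. It assumes abilities in the normed sample follow a standard normal distribution, which is an illustrative assumption rather than a description of our actual norming tables, and the 15-point flagging threshold is hypothetical.

```python
from statistics import NormalDist

# Assumed ability distribution of the normed sample (illustrative only).
NORM = NormalDist(mu=0.0, sigma=1.0)

def to_percentile(theta):
    """Percentile rank of an ability estimate within the assumed norm group."""
    return 100.0 * NORM.cdf(theta)

def percentile_band(theta, se):
    """Percentile range covered by theta plus or minus one standard error."""
    return to_percentile(theta - se), to_percentile(theta + se)

def needs_more_data(theta, se, max_width=15.0):
    """Flag estimates whose percentile band is wider than a hypothetical threshold."""
    low, high = percentile_band(theta, se)
    return (high - low) > max_width

# Two students at roughly the 65th percentile, measured with different precision.
for se in (0.08, 0.35):
    low, high = percentile_band(0.385, se)
    print(f"SE {se:.2f}: {low:.0f}th to {high:.0f}th percentile, "
          f"flag for another session: {needs_more_data(0.385, se)}")
```

The same point estimate produces a narrow band for the first student and a wide one for the second, and only the second would be flagged under this threshold.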

We deliberately avoided displaying raw IRT logit scores (which range from roughly -3 to +3 and are meaningless to most non-specialists). The underlying mathematics is sophisticated; the interface should not be.

Limitations and What We Don't Claim

IRT is not magic. There are meaningful limitations that any honest description of the system should acknowledge.

First, IRT assumes that a single underlying ability trait explains performance on all items in the domain. For multiplication facts, this is a reasonable simplification — but maths is multi-dimensional, and a student's IRT score on our multiplication module is not a general maths ability estimate. We make no claim that it predicts performance on geometry or data handling.

Second, IRT estimates are only as good as the sample used to calibrate items. Our pilot sample is a convenience sample of UK primary schools in South London — not a nationally representative sample. Our norms are provisional and will improve as we accumulate data from a broader geographic and demographic range of schools.

Third, response time is not currently part of our IRT model. We use latency as a separate signal for fluency, but we don't incorporate it into ability estimation. This is a known limitation: a student who takes 8 seconds to answer 7 × 6 correctly has a different knowledge state from a student who answers in 1.2 seconds. We're actively researching response-time IRT models, and expect to incorporate them in a future release.

Why This Matters for Teachers, Not Just Researchers

Most primary teachers don't care about maximum likelihood estimation or ICC curves. They care about which students need more help and with what. IRT helps answer that question more accurately than difficulty bands do.

The practical consequence for teachers: the platform's recommendation for which student to pull aside for targeted support is based on a statistically grounded estimate of where each student sits, not on a crude count of how many questions they got right. A student who got 8 out of 10 questions right, but whose response pattern suggests guessing on the higher-difficulty items, looks different from a student who got the same score by reliably answering mid-difficulty questions accurately.

That distinction matters for intervention. The first student probably needs harder practice to confirm whether their high-difficulty answers were genuine or lucky. The second student might be ready to move on. A difficulty-band system would treat them identically. IRT doesn't.

Where We're Heading

We're currently piloting an extension of the IRT engine to cover addition and subtraction fluency for Years 1 and 2 — a prerequisite for meaningful multiplication progress. The item calibration work for that module will run through summer term 2025, with a release planned for September.

We're also in early discussions with the UCL Institute of Education about a collaborative validation study to compare Multiplication Tables Check (MTC) outcomes for students using Everybody Counts against a control group using standard classroom practice. If it proceeds, that study would provide the first externally validated effectiveness evidence for the platform. That matters for schools making procurement decisions, and for us in making honest claims about what the system delivers.